A customer recently sent in an SAE 50 gear oil sample from a rigid dump truck working in a UK quarry. The machine had no history of problems and had a thorough, complete service record, with all maintenance performed by the official OEM (Original Equipment Manufacturer) dealer. All the lubricants came from a major lube oil supplier and had full approvals for the machine. The customer was also understood to use the official OEM telemetry systems to aid early fault diagnosis, which had raised no concerns, as well as the OEM preventative maintenance (replace before failure) schemes to ensure parts were not run beyond their intended lifespans.

It is fair to say that the customer was doing everything possible to maintain the machine in a good condition and avoid premature failures.

The customer received free oil analysis with some of their operation, maintenance and service contracts from the dealer, carried out by the OEM’s preferred lab. In addition, the customer regularly sent samples to their own choice of lab (Oil Analysis Laboratories, OAL) for an independent analysis. The dealer was aware of this and would often fill both sample bottles at the same time during a service, saving the client a repeat stoppage to sample.

The OEM set its wear limits as a rate per hour. Based on the customer’s usual drain intervals, these worked out for this sampling point at approximately 250 ppm of iron as a minor caution, 400 ppm as serious and 800 ppm as critical. The OEM also had limits for magnetic particles, measured by PQ (Particle Quantification) and equivalent tests, of 150, 200 and 350 for caution, serious and critical respectively.

“The sample showed visible metal chunks in the oil”

Upon arrival at OAL the sample was observed to contain considerable visible metallic particles. The ferrous debris value (from a technology similar to the PQ instrument, but reporting in ppm rather than as a unitless index) was 82 ppm. The standard ICP wear element iron that most labs use was 3 ppm, because ICP struggles to detect particles larger than about 5 microns. LubeWear, the acid digestion technique that detects all the particles, found 431 ppm of iron. The ratio of LubeWear iron to standard ICP iron was well over 100, meaning there was a large skew towards abnormally large particles that needed addressing.

So even though the standard iron and magnetic properties of the oil said the wear was within OEM limits, the analysis findings using LubeWear showed otherwise.
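To make the comparison concrete, here is a minimal sketch that checks the three OAL readings against the OEM limits quoted earlier. It rests on some assumptions of mine: that a reading equal to a limit counts as reaching it, that the iron limits can be applied to the LubeWear figure, and that the ppm ferrous debris value can be judged against the PQ-type limits as an "equivalent test". The function and variable names are purely illustrative.

```python
# OEM limits quoted in the article: (caution, serious, critical).
IRON_LIMITS_PPM = (250, 400, 800)
FERROUS_DEBRIS_LIMITS = (150, 200, 350)


def classify(value, limits):
    """Return the highest severity band whose threshold the value reaches.

    Assumption: a value equal to a threshold counts as reaching it.
    """
    caution, serious, critical = limits
    if value >= critical:
        return "critical"
    if value >= serious:
        return "serious"
    if value >= caution:
        return "caution"
    return "normal"


# Readings reported by OAL for this sample.
icp_iron_ppm = 3          # standard ICP iron
ferrous_debris_ppm = 82   # magnetic (ferrous debris) measurement
lubewear_iron_ppm = 431   # acid digestion (LubeWear) iron

icp_status = classify(icp_iron_ppm, IRON_LIMITS_PPM)
ferrous_status = classify(ferrous_debris_ppm, FERROUS_DEBRIS_LIMITS)
lubewear_status = classify(lubewear_iron_ppm, IRON_LIMITS_PPM)

# Ratio of acid digestion iron to standard ICP iron; a high value
# points to iron locked up in large particles that ICP cannot see.
large_particle_ratio = lubewear_iron_ppm / icp_iron_ppm

print(icp_status, ferrous_status, lubewear_status)  # normal normal serious
print(round(large_particle_ratio, 1))               # 143.7
```

On these numbers the two conventional tests sit comfortably in the normal band, while the same oil is past the serious limit once all the iron is counted.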

Surely seeing metal would be an issue?

Unfortunately, not all labs perform a visual inspection of the sample, and those that do often skip it on darker oils such as dark gear or engine oils. I personally consider this a big mistake, which is why in my own lab photographing the appearance of every sample with very high definition cameras is standard practice. I also ensure we use fully transparent containers, not translucent or opaque ones, so every speck can be visually inspected.

Why isn’t PQ detecting all the iron?

PQ is a great technology for detecting magnetic particles in the oil, but for completeness we should use the term ferrous debris, as PQ is a brand name (like hoover rather than vacuum cleaner, or Coke when you mean cola) rather than the technology itself. PQ gives a unitless index of the magnetic material present, whereas other ferrous debris methods, such as the instruments we use in our lab, report values in ppm for added accuracy. However, the greatest benefit of PQ is also its problem: it detects only magnetic particles. Aluminium or copper, for instance, it can’t see. Equally, iron is only magnetic in certain states, usually as pure iron. Iron quickly rusts in the presence of moisture and air, and the rust is not magnetic. Exposed iron particles also react with the sulphurous antiwear additives in the lube oil to form iron sulphide, which is not magnetic either. Hence ferrous debris, PQ or whatever else you want to call the technology still can’t detect everything, even in its sweet spot of iron. In this case the iron was in mixed states, so only some of it was magnetic and detectable by its magnetic properties.

What was the cause of the abnormal wear?

There were no obvious contaminants such as dirt or water to cause abrasive wear. Instead it was something more fundamental to the lubrication mechanism. The answer came from the most important property of a lubricant: its viscosity. As mentioned earlier, the grade in use was supposed to be an SAE 50 (defined as a viscosity between 16.3 and 21.9 mm²/s at 100 °C). The sample had a viscosity at 100 °C of 16.9 mm²/s, so it was within grade, but more important was the analysis at 40 °C, at which the new oil has a viscosity of 205 mm²/s.

“We knew the viscosity was low. We now needed to work out why.”

In contrast, the sample received showed a viscosity of 175 mm²/s at 40 °C, lower than expected despite still being in grade at 100 °C. Automotive SAE grades have no fixed limits for viscosity at 40 °C as they do at 100 °C, but because the numbers involved are larger, the 40 °C figure gives a better indicator of viscosity changes than the 100 °C figure. We knew the viscosity was low. We now needed to work out why. The most likely sources included solvents such as fuel and wrong oils such as hydraulic oil. The lubricants used on site were predominantly UTTO (universal tractor transmission oil) products with very similar additive packages, so a change in additive levels could not be used to identify the source.
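The viscosity reasoning can be written out as a short worked example. This is only a sketch using the figures in this article (16.9 mm²/s at 100 °C, and 205 mm²/s new against 175 mm²/s sampled at 40 °C). Treating the upper bound of the SAE 50 band as exclusive is my assumption, and the names are illustrative.

```python
# SAE 50 band at 100 °C as quoted in the article, in mm²/s.
SAE_50_MIN = 16.3  # lower bound, treated as inclusive
SAE_50_MAX = 21.9  # upper bound, treated as exclusive (assumption)


def in_sae_50(kv100):
    """True if the 100 °C kinematic viscosity falls inside the SAE 50 band."""
    return SAE_50_MIN <= kv100 < SAE_50_MAX


def percent_drop(new_value, used_value):
    """Viscosity loss relative to the new oil, as a percentage."""
    return (new_value - used_value) / new_value * 100


sample_kv100 = 16.9  # sample viscosity at 100 °C
new_kv40 = 205.0     # new oil viscosity at 40 °C
sample_kv40 = 175.0  # sample viscosity at 40 °C

within_grade = in_sae_50(sample_kv100)
drop_kv40 = percent_drop(new_kv40, sample_kv40)

print(within_grade)         # True
print(round(drop_kv40, 1))  # 14.6
```

So a sample that still passes the grade check at 100 °C has nonetheless lost roughly 15% of its viscosity at 40 °C, which is the change that prompted the search for a thinner fluid getting into the system.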

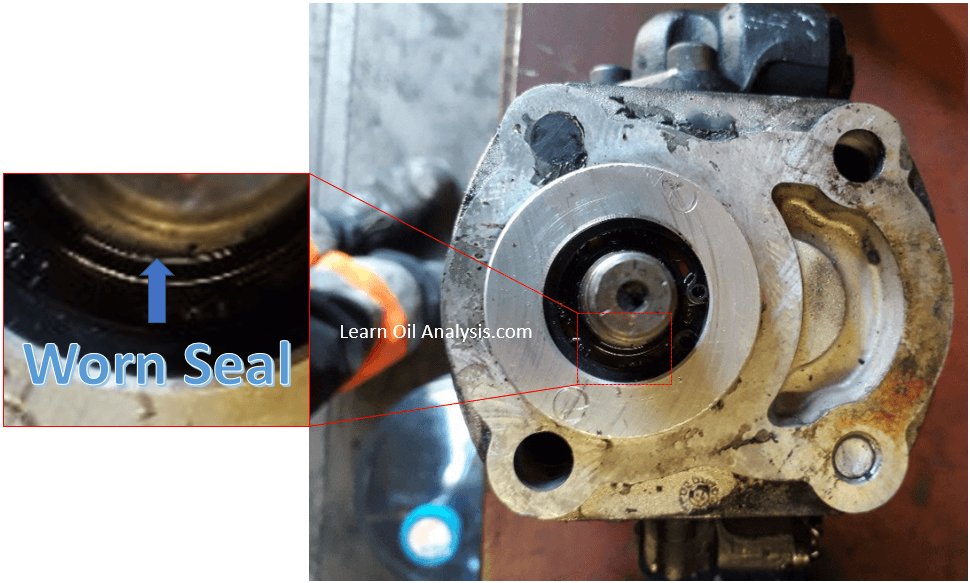

The lab telephoned the client and explained the situation. The client confirmed that the lubricant storage systems were clearly labelled, so it was unlikely that someone had filled the system with the wrong oil and more likely that a seal was leaking. The client added some of the OEM branded leak check dye fluid (coloured blue), and within 24 hours it was found that hydraulic oil was leaking into the system. The cause was a worn seal (see the photo, where a shadow is visible between the seal and the shaft at 6 o’clock; the seal should fit tightly against the shaft, so the gap shows its integrity had been lost). The customer was happy that a failure had been averted and began speaking to the OEM about replacing the seals.

What about the OEM lab sample – did they detect the problem too?

A couple of days later the customer telephoned to say the report from the OEM’s lab (remember, both samples were taken at the same time) had come back normal, with only 5 ppm of iron (similar to the standard ICP value obtained by OAL) and 60 for PQ. Had it not been for the customer using OAL’s service, including LubeWear, a serious seal leak causing abnormal wear would have been missed. Based on the sampling interval and the rate of hydraulic oil ingress, the system could potentially have failed before the standard analysis ever raised an alarm.

Some may suspect that the OEM wants the machine to fail in order to sell more parts, and that this is why the result came back normal. That is a little unfair: the OEM also used an independent lab, so there was no question over impartiality. Equally, the machines still had a long extended warranty, so it would have been in the OEM’s interest to resolve the issue quickly and reduce its own costs. Instead, the explanation is that the laboratory was using outdated methodology (as do most labs) to detect wear. The fundamental flaw of the last 30 years, that standard elemental analysis only detects normal wear and misses the abnormal wear, hasn’t been addressed. Acid digestion, a technology known about for decades but properly updated to today’s needs in the LubeWear technique, instead detects all the wear. I think the customer’s words described this quite aptly: “Normal oil analysis tells you that your machine is failing, whereas LubeWear tells you before it starts to.”

If you would like to find out more about fluid analysis and how updated methodologies offer better ways to prevent failures, feel free to contact us.