This article will answer the following questions:

- Why have traditional oil condition monitoring services been missing abnormal wear associated with failures for years?

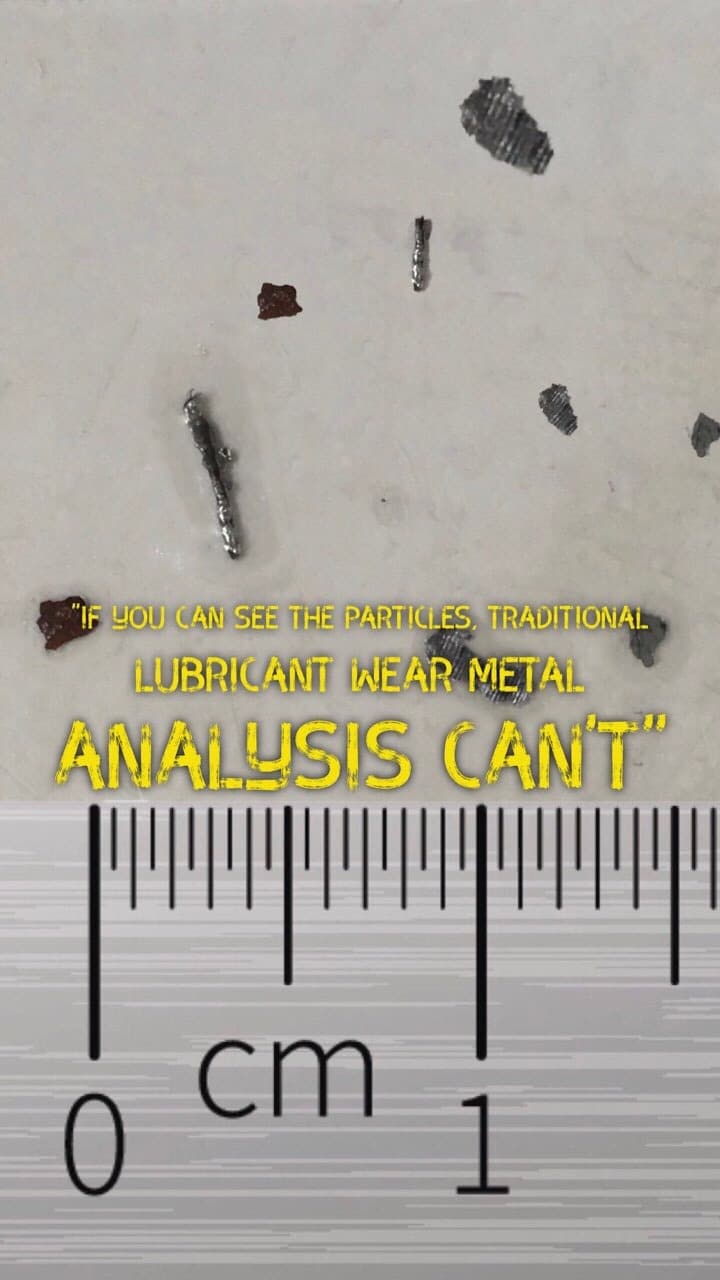

- Why is it that if you can see a wear particle, traditional lab analysis cannot?

- Why has grease elemental analysis traditionally been poor at giving consistent and repeatable results?

- What are the alternatives that have been tried to give customers the true answer of wear in their oil and grease samples?

- What is this new technique called “LubeWear” that has now solved the problem of poor wear metal analysis that has plagued the industry for years?

The traditional approach to wear metal determination

Each year millions of lubricant samples are analysed by thousands of laboratories across the world helping customers prevent machinery failures and save money. These laboratories are often reputable with high levels of accreditation, undergoing strict quality control processes and participating in round robin schemes to confirm their data shows no evidence of bias.

Almost all condition monitoring labs use ICP (Inductively Coupled Plasma) or arc and spark methods to measure the wear elements in your samples. These are tried and tested methods, and the quality control data would tell you they are exceptionally accurate.

You may have guessed that there is a “BUT” coming, and it relates to a fundamental flaw in these technologies: they are blind to anything over 10 microns. Considering that a grain of sand is approximately 70 microns, this means that if you can see the wear in the sample bottle, you can be confident the traditional wear metal analysis methods cannot, and will fail to catch it.

How do traditional methods of detecting wear metals work?

Atomic emission spectroscopy includes ICP and RDE instruments. Both methods work on the principle of vaporising the wear particles so that the individual elements are excited.

Rotating disc electrode (RDE) instruments work by rotating a disc electrode partly submerged in the lubricant sample. The lubricant sticks to the disc and is carried to the top, where a graphite rod sits very close to the rotating disc. An electrical arc is passed across the gap between the rod and the disc (hence the “arc and spark” nickname), vaporising the material in the gap. RDE is a great method for field testing, as it requires minimal solvent and no argon supply, is portable and is fairly easy to use.

The other main technology is called Inductively Coupled Plasma (ICP). In ICP the sample is passed through a spray chamber that produces a nebulised mist of sample, which is carried through to a plasma flame to be vaporised. The flame itself is produced by applying radio frequency energy to the inert gas argon and reaches temperatures hotter than the surface of the sun. ICP is really the method of choice that all major laboratories use to determine lubricant elements. It’s exceptionally accurate on small particle sizes and dissolved elements.

The vaporising in both methods excites the wear elements. The excited elements then return to their ground state by emitting light. The wavelength of this light is specific to each element present in the sample, whilst the intensity of the light emitted is proportional to the concentration.

This is all great, but as already mentioned these methods struggle with larger particles.
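The proportionality between emitted intensity and concentration is what lets an instrument turn a light reading into a ppm value. The sketch below is only an illustration of that idea, assuming a straight-line calibration fitted to a few standards. The standard concentrations, the counts and the function names are all hypothetical and are not taken from any particular instrument.

```python
def fit_calibration(concentrations_ppm, intensities):
    """Least-squares straight line: intensity = slope * concentration + intercept."""
    n = len(concentrations_ppm)
    mean_c = sum(concentrations_ppm) / n
    mean_i = sum(intensities) / n
    sxx = sum((c - mean_c) ** 2 for c in concentrations_ppm)
    sxy = sum((c - mean_c) * (i - mean_i)
              for c, i in zip(concentrations_ppm, intensities))
    slope = sxy / sxx
    intercept = mean_i - slope * mean_c
    return slope, intercept


def intensity_to_ppm(intensity, slope, intercept):
    """Invert the calibration line to convert a measured intensity to ppm."""
    return (intensity - intercept) / slope


# Hypothetical calibration standards (ppm) and detector counts for one element.
standards_ppm = [0.0, 10.0, 50.0, 100.0]
standard_counts = [20.0, 1020.0, 5020.0, 10020.0]

slope, intercept = fit_calibration(standards_ppm, standard_counts)
sample_ppm = intensity_to_ppm(2520.0, slope, intercept)
print(f"slope={slope:.1f} counts/ppm, sample={sample_ppm:.1f} ppm")
```

The conversion is only as good as the assumption that everything in the sample emits light the way the dissolved standards do, which is exactly where large particles cause trouble.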

Why do traditional ICP and arc and spark methods miss large particles?

To explain this simply I first need to take a slight diversion into the realm of cookery. Last night my wife and I made a delicious chicken dish in which chicken breasts went into the oven at 200°C for 25 minutes and came out with a golden crispy skin. Despite the golden exterior we still cut into the chicken breast to make sure it was cooked in the middle. It was. However, if I had cooked a whole chicken, then after half an hour it would most certainly still have been raw.

So why the difference? The only significant difference between the chicken breast portion and a whole chicken is the size. The thicker the layer of meat for the heat to penetrate, the longer it takes to cook the centre. If I were to dice the chicken portions into thin strips, the chicken would cook even quicker because there is more surface area of meat in contact with the heat from the oven.

If we now return to wear particles, which are in the plasma flame or arc discharge for only a short period of time, it may now make sense that larger particles are hugely underrepresented: they are not completely vaporised and so not completely measured. In essence, you can think of “uncooked” large wear particles being left behind.

Size also causes underrepresentation in other ways beyond simply not being vaporised, as outlined below.

Why does large wear never reach the point of being vaporised with traditional methods?

Picture the RDE method. With a relatively thin oil, large particles keep dropping to the bottom of the sample container and are less easily carried to the arc at the top than smaller particles. You may be forgiven for thinking this means the problem goes away with thicker oils. True, the thicker the oil, the better it keeps particles suspended before they drop to the bottom. However, wear particles tend to be larger in the thicker oils used in gears than in thinner oils such as hydraulic fluids, so the problem still occurs with the most severe forms of wear. With solid greases the problem is that the sample is not homogeneous, so although a particle may be held in position better, its significance is questionable.

Hence both these problems mean wear becomes underrepresented, as fewer large particles reach the arc.

With ICP there is a spray chamber that produces a fine nebulised mist of lubricant that travels up to the plasma flame. However, not all of the sample becomes mist, and large droplets fall to waste without ever reaching the flame. This is normally not an issue for smaller particles, as the concentration reaching the plasma is still proportional to the original concentration. Larger particles, however, are less likely to be carried in a stable mist.

The problem of large particles dropping to the bottom due to viscosity effects, as described for arc and spark, plays even more of a role with ICP because the oils are diluted in solvents, usually white spirit, before processing. This makes the fluid very thin, and anything not dissolved starts to drop out quickly in the sample tube before the autosampler begins to draw the sample into the ICP. So in addition to the losses in the spray chamber, another issue is that large particles are never drawn up in the first place. With greases and certain Group V synthetic lubricants the problem is worse, as these are not very soluble in white spirit and its close alternatives. Rather than a nice, transparent, homogeneous sample going onto the ICP, you end up with a murky and very non-homogeneous one. This means the data produced is very inaccurate, and the lab will often express the result at the diluted level (e.g. as a 5% dilution) to hide the error that would be seen when multiplying back up to the original concentration.

Why is large wear underrepresented even when it reaches the point of being vaporised with traditional methods?

Even when particles reach the point where they are vaporised, they spend such a short time exposed to the high temperatures (usually only a few seconds) that particle size becomes a factor. Since both methods need the sample to be vaporised in order to measure it, larger particles cannot be measured fully, as not enough energy is available to vaporise them completely. Returning to the cooked chicken analogy, the outside of a large particle is ‘cooked’ (vaporised) while the inside is still ‘raw’, whereas small particles, like the diced chicken, have a much larger surface area relative to their size and are completely vaporised. Hence large particles are underrepresented, as only part of each particle is measured. ICP detection usually begins to decrease above 5 microns and arc and spark above approximately 10 microns. Both limits are much smaller than the >15 micron size that classifies wear as abnormal.
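The settling effect described above can be put into rough numbers with Stokes' law, which gives the terminal settling velocity of a small sphere in a viscous fluid and scales with the square of the particle diameter. This is a minimal sketch only: real wear particles are not spheres, Stokes' law strictly applies only to slow, smooth flow around small particles, and the fluid density and the two viscosities used here are assumed illustrative values rather than measurements.

```python
G = 9.81  # gravitational acceleration, m/s^2


def stokes_settling_velocity(diameter_um, particle_density, fluid_density,
                             viscosity_pa_s):
    """Terminal settling velocity (m/s) of a small sphere from Stokes' law."""
    d = diameter_um * 1e-6  # microns to metres
    return (particle_density - fluid_density) * G * d ** 2 / (18.0 * viscosity_pa_s)


STEEL_DENSITY = 7850.0   # kg/m^3, typical value for steel
FLUID_DENSITY = 850.0    # kg/m^3, assumed for both fluids
THIN_VISCOSITY = 0.001   # Pa.s, assumed for a solvent-diluted sample
THICK_VISCOSITY = 0.3    # Pa.s, assumed for a thick gear oil

for diameter in (5, 50):
    for name, mu in (("diluted sample", THIN_VISCOSITY),
                     ("gear oil", THICK_VISCOSITY)):
        v = stokes_settling_velocity(diameter, STEEL_DENSITY, FLUID_DENSITY, mu)
        print(f"{diameter:>3} um steel sphere in {name}: {v * 1000:.5f} mm/s")
```

Under these assumptions a particle ten times larger settles a hundred times faster, and thinning the fluid speeds settling up in direct proportion, which is why diluted ICP samples are especially prone to losing their largest particles before they are ever drawn up.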
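The ‘cooked outside, raw inside’ picture can be turned into a toy calculation. The sketch below assumes a spherical particle in which only an outer shell of fixed depth is vaporised. The 2.5 micron shell depth is a hypothetical value, chosen only so that particles up to 5 microns are fully measured, in line with the ICP figure quoted above. It is not a model used by any instrument or laboratory.

```python
def measured_fraction(diameter_um, shell_depth_um=2.5):
    """Fraction of a sphere's volume lying within shell_depth_um of its surface.

    Toy model: only this outer shell is assumed to be vaporised and measured.
    """
    if diameter_um <= 2 * shell_depth_um:
        return 1.0  # small enough to be vaporised all the way through
    core_diameter = diameter_um - 2 * shell_depth_um
    return 1.0 - (core_diameter / diameter_um) ** 3


for diameter in (2, 5, 15, 70):
    fraction = measured_fraction(diameter)
    print(f"{diameter:>3} um particle: {fraction:.0%} of its volume measured")
```

In this simplified picture the measured share falls quickly once a particle passes the fully vaporised size, so the larger the wear, the smaller the share of it that shows up in the result.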

Why does this matter?

Well, abnormal wear occurs when there is a serious fault in the machinery. It is usually greater than 15 microns and can be up to several millimetres in size depending on the severity. This means abnormal wear is underrepresented and failures can be missed.

For a long time I thought this was just a limitation you had to work with in wear metal analysis. As a diagnostician I would look for trends such as ferrous debris / PQ rising while elemental iron was not, in order to identify trends in abnormally sized wear. This approach has the limitation that it assumes the iron is in a magnetic form, which is not always the case, e.g. rust is not magnetic. Equally, not all wear is iron based, so aluminium, copper, lead and all the other wear elements still have the problem.

So how do you measure the elements accurately?

To truly measure the concentration of wear elements you really need to get the particles down to less than 5 microns. In reality this involves a process called digestion. Traditionally this has been done by acid digestion of the sulphated ash of the lubricant. It is a well-known technology in the industry and gives very accurate data, as the oil is turned to an ash in a furnace and the ash is then dissolved in an acid. Another advantage is that low levels of detection can be achieved with acid digestion, since low concentration aqueous ICP standards are readily available and much easier to obtain than low level oil standards. This is because low level calibration standards are a must for, e.g., drinking water testing labs, where there are maximum legal metal concentrations, so it is worthwhile for manufacturers to produce standards even down into the parts per billion range.

BUT, because the process is so long winded and involves days of man time to perform all the various steps to achieve a precise and accurate end result, it drives the cost of performing such analysis to research rather than commercial lab budgets where the end customers don’t want to pay 5 to 8 times their usual oil and grease analysis price to receive this in-depth testing. Equally because it’s so long winded to perform, 24 to 48 hour turnarounds offered by most labs turn into 5 day turnarounds.

Generally the use of such a method has been on post failure analysis investigations where I used to regularly have this performed on filters to determine the metallurgy of wear metals trapped within.

With greases, traditional elemental analysis such as ICP and AA (atomic absorption) is particularly poor at giving a correct answer. One of my fellow lube analysis working group members, when working for a large maritime classification society, wrote a paper on this in 2001 for a popular lubricants magazine aimed at the marine industry. In it he highlighted that traditional methods of analysing greases were poor and that the preferred method was always sulphated ash. As I understand it, this didn’t bring about a rush of customers looking for the digestion method, despite it producing far more accurate results. The reason is that, in the customers’ view, the increased lead times and cost outweighed the benefits of the method.

Lots of modifications have been made to digestion methods, including early forms of microwave digestion. For a time these showed a lot of promise for faster preparation, but their use tended to be focused on running a dozen or so awkward samples that couldn’t be run normally rather than on being a mainstream test.

So despite the traditional digestion methods giving the best results for wear concentrations, the time and cost make them prohibitive for routine sample analysis.

Another hurdle to overcome, even if digestion processing can be improved, is that you lose the information about particle sizes. You may have high iron but not know whether it is mainly small, normal rubbing wear particles or large, abnormal wear chunks.

So what are the alternatives?

Filtration spectroscopy

One alternative, at least for lube oils if not for solid greases, is filtration spectroscopy. It uses an interesting modification of the arc and spark method, capturing large particles within the graphite electrode, which was found to be porous. This is indeed an improvement on basic RDE systems, but the flaw is that the small particles are washed away by the solvent washes, skewing the analysis data in the other direction. Original problems with the technology included a lack of quantitative gravimetric standards (i.e. you can only provide ratios of elements rather than exact concentrations), variations in disc porosity giving poor repeatability, and difficulty in processing sooted samples. At the time of writing many of these limitations are beginning to be overcome, for example by using thinner-walled electrodes, but as with any filter process this creates new limitations: the less depth of filter media a particle has to pass through, the more likely it is that a particle that is not uniformly shaped (e.g. cutting wear that is long and thin) could navigate its way through without being trapped.

X-Ray Fluorescence (XRF)

XRF works slightly differently to ICP and RDE in that the sample is not destroyed during testing, but the principle of exciting the electrons to emit light is the same. XRF works by shining X-Rays onto the sample (instead of using a plasma flame or an electrical arc) to excite the electrons. As atoms return to their ground state they emit light that can be detected.

At first glance XRF seems like the solution, as it works on solids too. Many of the larger labs or new oil testing labs have these instruments and use them for detecting elements such as sulphur or chlorine, as well as certain additive concentrations in oil company QC labs. They are often cheaper to buy than an ICP and have little or no solvent costs, so you might be wondering why XRF is not the default method of analysing elements, replacing ICP altogether.

Well the problem comes from the limits of detection, i.e. the smallest concentration of an element that can be guaranteed to be a result and not noise of the instrument. XRF works best on heavy elements i.e. elements half way to the bottom end of the periodic table. However in terms of oil analysis the metals are towards the lighter end and middle of the periodic table. This means you need to have hundreds if not thousands of ppm of an element to be able to detect them.

The typical limits of detection from one instrument manufacturer are as follows:

- Magnesium: 1000 ppm
- Aluminium: 190 ppm
- Silicon: 170 ppm
- Phosphorus: 100 ppm
- Sulphur: 20 ppm
- Chlorine: 20 ppm
- Iron: 5 ppm
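To see what these limits mean in practice, the short sketch below checks a set of element concentrations against the limits of detection listed above. The limits are the figures quoted in this article. The sample values and the function name are hypothetical, chosen only to show how easily ordinary wear and contaminant levels fall below what the instrument can report.

```python
# Limits of detection (ppm) as quoted above for one instrument manufacturer.
XRF_LOD_PPM = {
    "Mg": 1000,
    "Al": 190,
    "Si": 170,
    "P": 100,
    "S": 20,
    "Cl": 20,
    "Fe": 5,
}


def is_detectable(element, concentration_ppm, lods=XRF_LOD_PPM):
    """True if the concentration is at or above the element's detection limit."""
    return concentration_ppm >= lods[element]


# Hypothetical used-oil sample, values in ppm.
sample = {"Fe": 40, "Al": 25, "Si": 15, "Mg": 10}

for element, ppm in sample.items():
    status = "detectable" if is_detectable(element, ppm) else "below limit of detection"
    print(f"{element}: {ppm} ppm -> {status}")
```

With these example values only the iron would register, while the aluminium, silicon and magnesium would go unreported.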

Naturally there is variation depending on the type of instrument purchased, so some of the higher end instruments will perform better, but the general issue with lighter elements still exists to some degree with all XRF technologies. Even if an instrument manufacturer can quote much lower limits of detection, these are based on dissolved elements that are small in size. With larger particles the classic problem of particle size arises again, as explained below.

To detect elements by XRF, not only do the X-rays need to get into the wear particle, but the emitted light also needs to come out again to be detected. As you know if you have ever had a broken bone X-rayed, a thin layer of lead in a cloth placed over vital organs can prevent X-ray penetration completely, and much thinner layers begin to reduce penetration partially. It is generally accepted that at best the penetration for XRF can be up to 1000 microns (1 mm) on the heaviest elements, but on the lighter elements, including vital wear metals such as aluminium, the analysed depth is only a few microns.

So larger wear particles will be under represented with all elements, but because of the nature of the technology, i.e. working better with heavier elements the lighter elements will be worst affected.

Therefore particles need to be digested to make them smaller with XRF too. Hence XRF’s place tends to be in measuring percent rather than ppm concentrations, and it works best on dissolved elements such as additives. This is why it is commonly used by new oil laboratories to determine additive concentrations, where there will be no wear particles because the oil is unused.

So although each of the technologies discussed so far has its own distinct advantages and disadvantages, the core issue of particle size remains. None of them gives the true wear metal concentrations in the sample, and all could potentially be failing to catch failures.

So how to achieve the right answer whilst still achieving fast turnaround and low pricing?

This brings us full circle to the acid digestion dilemma, as the only true way to achieve the correct concentration of wear elements is to take particle size out of the equation. The only way to do this is to bring all the particles into the range where they can be detected fully (i.e. less than 5 microns), which means the particles need to be dissolved. Acid digestion achieves this, so the wear particles are much smaller, easily vaporised and hence fully detectable.

If we work on the principle that digesting can be done cost effectively and in a timely manner so that it could be offered routinely we still hit another crucial stumbling block. By digesting the particles you lose the information about particle size – how do you know if a value of 500ppm of iron is lots of small rubbing wear particles or fewer severe wear particles of a large size?

The only way to see this change is to really do a before and after digestion analysis, so that the larger particles are no longer under represented. If the values spike after the digestion this means the wear is of a larger size.
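The before and after comparison can be sketched in a few lines. The function below compares one element's result from a standard analysis with its result after digestion and flags a large increase. It is an illustration of the reasoning only: the 1.5 ratio threshold, the function name and the example values are hypothetical and do not represent any laboratory's actual alarm limits or method.

```python
def compare_digestion(standard_ppm, digested_ppm, ratio_threshold=1.5):
    """Compare one element's result before and after digestion.

    Returns (hidden_ppm, ratio, large_wear_suspected), where hidden_ppm is the
    extra concentration revealed by digestion.
    """
    hidden_ppm = max(digested_ppm - standard_ppm, 0.0)
    if standard_ppm > 0:
        ratio = digested_ppm / standard_ppm
    else:
        # Nothing seen before digestion: any result afterwards is a spike.
        ratio = float("inf") if digested_ppm > 0 else 1.0
    return hidden_ppm, ratio, ratio >= ratio_threshold


# Hypothetical iron results (ppm) for two samples.
print(compare_digestion(100.0, 110.0))  # little change: mostly small particles
print(compare_digestion(100.0, 500.0))  # large spike: large particles suspected
```

Two samples with the same standard iron reading can therefore tell very different stories once the digested value is placed alongside it.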

Until 2018 no lab was doing this. Then Oil Analysis Laboratories stepped into the picture, offering this not as an optional extra but as the core basis of analysis included in every sample. What’s more, the test is available on next day turnarounds. They call this testing LubeWear. LubeWear achieves the true wear metal analysis picture in terms of both concentration and particle size. There is no compromise the customer has to make to receive this test, such as very high analysis costs or long turnaround times, and in fact it meets the potential wear metal analysis has been striving to achieve since its inception many decades earlier.

A brief video summary explaining the technology is shown below.

The beauty and proof of the technology is that LubeWear includes the standard wear metal analysis you would normally receive from other labs and places it side by side on the report with the digestion values. You don’t need to run side by side trials between labs, as every sample report gives you evidence of a side by side trial of both methods, just in case you needed any more convincing.

To find out more visit LubeWear.com.